Introduction

Face recognition is a fascinating area of computer vision that involves identifying and verifying individuals based on their facial features. In this project, we'll build a simple face recognition system using the OpenCV library in Python.

Project Overview

The project is structured into three primary steps:

1. Collecting Face Samples:

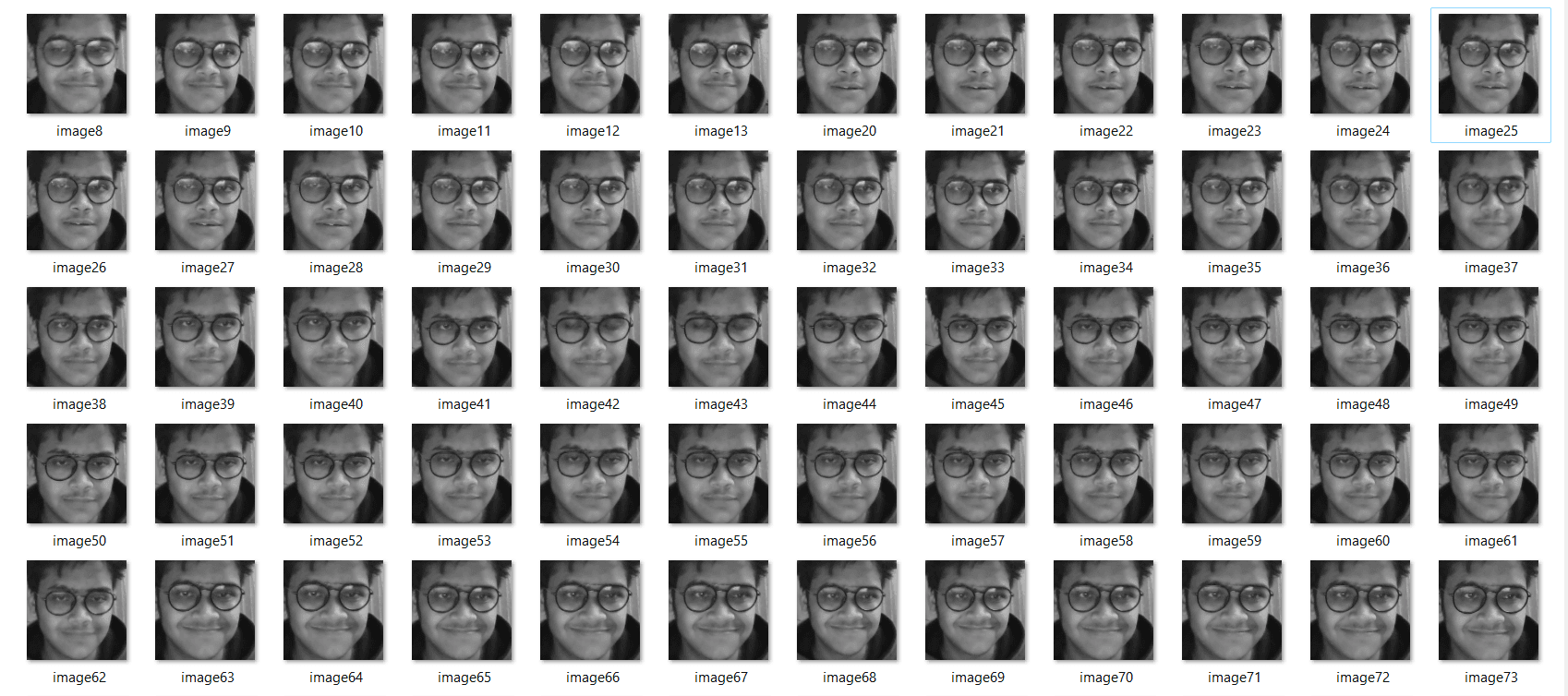

We start by capturing face samples from the computer's webcam using OpenCV. The user is prompted to enter their name, and the system captures 100 grayscale face images, storing them in a specified directory for subsequent training.

2. Training the Face Recognition Model:

Once we have a dataset of face samples, we train a face recognition model using the LBPH (Local Binary Pattern Histogram) algorithm provided by OpenCV. This model learns to recognize the unique features of each individual's face. The steps involved are:

- Loading the collected face samples.

- Training an LBPH (Local Binary Pattern Histogram) face recognition model using OpenCV.

3. Real-Time Face Recognition:

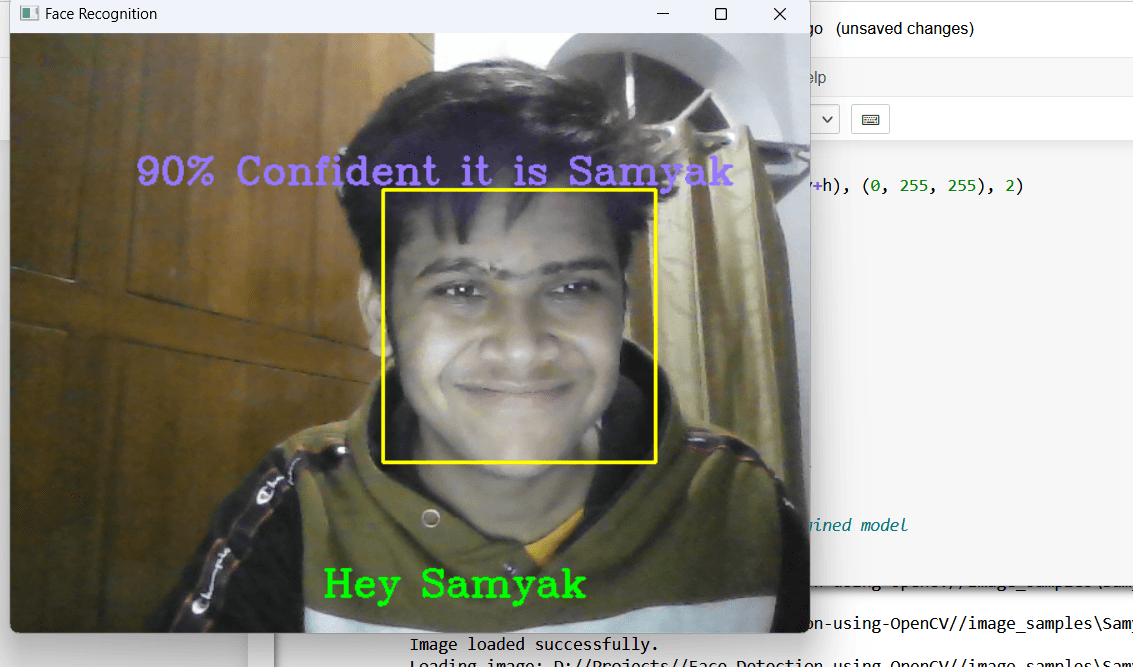

Now with the trained model, we implement a real-time face recognition system. The system continuously captures frames from the webcam, detects faces, and uses the trained model to predict the identity of the user. If the confidence level is above a certain threshold, the system greets the user by name.

Prerequisites:

Before we delve into each section of the code, here are some prerequisites:

1. Install Required Libraries:

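Make sure you have Python installed on your system. Install the necessary libraries using the following command. The LBPH recognizer used later lives in the cv2.face module, which ships with the opencv-contrib-python package rather than the plain opencv-python package:

pip install opencv-contrib-python numpy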

2. Download the Haar Cascade Classifier XML file:

Download the 'haarcascade_frontalface_default.xml' file from the OpenCV GitHub repository or use the provided one.

3. Adjust File Paths:

Update the file paths in the code according to your project structure.
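Before running the rest of the code, it can help to confirm that the prerequisites above are actually in place. The following is a minimal sketch, not part of the original project: the check_environment helper is a hypothetical name, and it assumes a pip-installed OpenCV build, which normally exposes the bundled cascade folder as cv2.data.haarcascades.

```python
import importlib
import os


def check_environment():
    """Report which pieces needed by this tutorial are available (hypothetical helper)."""
    report = {"cv2": False, "cv2.face": False, "cascade": None}
    try:
        cv2 = importlib.import_module("cv2")
    except ImportError:
        return report  # OpenCV is not installed or cannot be loaded

    report["cv2"] = True
    # cv2.face is only present when the contrib build is installed.
    report["cv2.face"] = hasattr(cv2, "face")

    # Assumption: pip builds expose the bundled cascade directory here.
    cascade_dir = getattr(getattr(cv2, "data", None), "haarcascades", None)
    if cascade_dir:
        candidate = os.path.join(cascade_dir, "haarcascade_frontalface_default.xml")
        if os.path.isfile(candidate):
            report["cascade"] = candidate
    return report


print(check_environment())
```

If the "cascade" entry is not None, that path can be passed to cv2.CascadeClassifier instead of a manually downloaded XML file.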

Section 1: Importing Libraries and Loading Haar Cascade Classifier

import cv2  # OpenCV library for computer vision tasks
import numpy as np  # NumPy library for numerical operations
import os  # OS library for file handling
import sys  # Sys library for system-specific parameters and functions

# Load Haar Cascade Classifier for face detection
face_classifier = cv2.CascadeClassifier('haarcascade_frontalface_default.xml')

In this section, we import the necessary libraries, including OpenCV and NumPy. The Haar Cascade Classifier is loaded to detect faces in images.

Section 2: Face Extraction Function

def face_extractor(img):
    gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)  # Convert image to grayscale
    faces = face_classifier.detectMultiScale(gray, 1.3, 5)  # Detect faces

    if len(faces) == 0:
        return None

    for (x, y, w, h) in faces:
        cropped_face = img[y:y+h, x:x+w]  # Extract face region

    return cropped_face

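The face_extractor function takes an image as input, converts it to grayscale, and then uses the Haar Cascade Classifier to detect faces. If no face is found, it returns None. Otherwise it crops and returns the face region; when several faces are detected, the region of the last one in the detection list is returned.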
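When more than one face is in view, one possible refinement is to keep the largest detection rather than the last one. The sketch below is an assumption about what a user might want, not what the original code does; largest_face is a hypothetical helper that works on any sequence of (x, y, w, h) boxes such as the one returned by detectMultiScale.

```python
def largest_face(faces):
    """Return the (x, y, w, h) box with the largest area, or None if there are none."""
    if len(faces) == 0:
        return None
    # Area of a box is width * height.
    return max(faces, key=lambda box: box[2] * box[3])


detections = [(10, 10, 40, 40), (100, 50, 120, 120), (300, 20, 60, 60)]
print(largest_face(detections))  # (100, 50, 120, 120)
```

The chosen box can then be used to crop the frame in the same way as in face_extractor.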

Section 3: Collecting Face Samples

def collect_face_samples(name, data_path='D://Projects//ML Code//Computer Vision//ML-workshop-ec2-CV-security-master//samyak'):
    cap = cv2.VideoCapture(0)
    count = 0

    if not cap.isOpened():
        print("Error: Camera not accessible")
        return

    while True:
        ret, frame = cap.read()
        if not ret:  # Stop if the camera did not deliver a frame
            print("Error: Could not read frame")
            break

        detected_face = face_extractor(frame)  # Run detection once per frame
        if detected_face is not None:
            count += 1
            face = cv2.resize(detected_face, (200, 200))
            face = cv2.cvtColor(face, cv2.COLOR_BGR2GRAY)

            file_name_path = os.path.join(data_path, name + '_image' + str(count) + '.jpg')
            cv2.imwrite(file_name_path, face)

            cv2.putText(face, str(count), (50, 50), cv2.FONT_HERSHEY_COMPLEX, 1, (0, 255, 0), 2)
            cv2.imshow('Face Cropper', face)
        else:
            print("Face not found")

        if cv2.waitKey(1) == 13 or count == 100:  # 13 is the Enter key
            break

    cap.release()
    cv2.destroyAllWindows()
    print("Collecting Samples Complete")

The collect_face_samples function captures face samples from the webcam. It continues to capture images until either 100 images are collected or the user presses Enter. The collected images are saved to the specified data path.
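One practical detail when saving samples: cv2.imwrite typically signals a failed write through its return value instead of raising, so a missing target folder can go unnoticed. A minimal sketch of building the sample path while making sure the folder exists is shown below. The sample_path helper is hypothetical, and the file naming simply mirrors the name + '_image' + count pattern used above.

```python
import os
import tempfile


def sample_path(data_path, name, count):
    """Build the path for one sample image and make sure its folder exists."""
    os.makedirs(data_path, exist_ok=True)  # No error if the folder is already there
    return os.path.join(data_path, f"{name}_image{count}.jpg")


# Demonstration in a throwaway directory so nothing is left behind.
with tempfile.TemporaryDirectory() as tmp:
    path = sample_path(os.path.join(tmp, "samples"), "alice", 1)
    print(os.path.basename(path))  # alice_image1.jpg
```

Inside the capture loop, the returned path could be passed to cv2.imwrite, and the boolean result checked before incrementing the counter.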

Section 4: Training Face Recognition Model

def train_face_recognition(data_path='D://Projects//ML Code//Computer Vision//ML-workshop-ec2-CV-security-master//samyak'):
    onlyfiles = [f for f in os.listdir(data_path) if os.path.isfile(os.path.join(data_path, f))]
    Training_Data, Labels = [], []

    for i, file in enumerate(onlyfiles):
        image_path = os.path.join(data_path, file)
        print("Loading image:", image_path)
        images = cv2.imread(image_path, cv2.IMREAD_GRAYSCALE)  # Load directly as grayscale

        if images is not None:
            Training_Data.append(np.asarray(images, dtype=np.uint8))
            Labels.append(i)  # Each image is labeled with its index in the file list
            print("Image loaded successfully.")
        else:
            print("Error loading image:", image_path)

    print("Number of training images loaded:", len(Training_Data))

    Labels = np.asarray(Labels, dtype=np.int32)

    model = cv2.face.LBPHFaceRecognizer_create()
    model.train(np.asarray(Training_Data), np.asarray(Labels))
    print("Model trained successfully")

    return model

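The train_face_recognition function loads the collected face images as grayscale and prepares the training data. Each image is labeled with its index in the file list; this is sufficient here because the recognition step uses only the distance returned by the model and receives the person's name as an argument. The function uses the LBPH Face Recognizer to train the model on this data and returns the trained model for use in real-time face recognition.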
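If samples for several people are stored in the same folder, one label per person is more useful than one label per image. The following is a sketch under that assumption, not part of the original project: labels_from_filenames is a hypothetical helper that relies on the '<name>_image<N>.jpg' naming produced by the collection step.

```python
def labels_from_filenames(filenames):
    """Map each '<name>_image<N>.jpg' file to an integer label shared by that name."""
    name_to_label = {}
    labels = []
    for filename in filenames:
        person = filename.rsplit("_image", 1)[0]  # Text before the last '_image'
        # A new person gets the next free integer; a known person reuses theirs.
        label = name_to_label.setdefault(person, len(name_to_label))
        labels.append(label)
    return labels, name_to_label


files = ["alice_image1.jpg", "alice_image2.jpg", "bob_image1.jpg"]
labels, mapping = labels_from_filenames(files)
print(labels)   # [0, 0, 1]
print(mapping)  # {'alice': 0, 'bob': 1}
```

The labels list could replace the per-image indices passed to model.train, and the mapping could be inverted to turn a predicted label back into a name.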

The LBPH (Local Binary Pattern Histogram) algorithm is a texture-based approach for facial recognition. It operates on grayscale images and is based on Local Binary Patterns (LBP). It is commonly used for face recognition tasks due to its simplicity and effectiveness, especially in the context of small to medium-sized datasets.

In the context of our face recognition system, LBPH is utilized to capture and learn the unique facial features of individuals. The training process involves creating histograms from the grayscale face samples, and the resulting model can then predict the identity of a person based on their facial characteristics.
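To make the idea behind LBP concrete, here is a simplified pure-Python sketch of the basic 3x3 operator and the histogram built from it. It is for illustration only: OpenCV's LBPH implementation differs in its details (for example, it splits the image into a grid of cells and builds one histogram per cell), and the function names below are hypothetical.

```python
def lbp_code(patch):
    """Compute the 8-bit LBP code of the centre pixel of a 3x3 patch."""
    center = patch[1][1]
    # Neighbours visited clockwise, starting at the top-left corner.
    neighbours = [patch[0][0], patch[0][1], patch[0][2], patch[1][2],
                  patch[2][2], patch[2][1], patch[2][0], patch[1][0]]
    code = 0
    for bit, value in enumerate(neighbours):
        if value >= center:  # Neighbour at least as bright as the centre sets the bit
            code |= 1 << bit
    return code


def lbp_histogram(image):
    """Count how often each LBP code occurs over all interior pixels."""
    histogram = [0] * 256
    for r in range(1, len(image) - 1):
        for c in range(1, len(image[0]) - 1):
            patch = [row[c - 1:c + 2] for row in image[r - 1:r + 2]]
            histogram[lbp_code(patch)] += 1
    return histogram


patch = [[6, 5, 2],
         [7, 6, 1],
         [9, 8, 7]]
print(lbp_code(patch))  # 241
```

Two faces are then compared by comparing their histograms; the smaller the distance between them, the more similar the textures.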

Section 5: Recognizing Faces in Real-Time

def recognize_face(model, name, confidence_threshold=80):
    cap = cv2.VideoCapture(0)

    if not cap.isOpened():
        print("Error: Camera not accessible")
        return

    while True:
        ret, frame = cap.read()
        if not ret:  # Stop if the camera did not deliver a frame
            print("Error: Could not read frame")
            break

        image = frame  # Full frame, used for drawing the overlay text
        face = face_extractor(frame)  # Cropped face, or None if no face was detected

        try:
            face = cv2.resize(face, (200, 200))  # Match the size of the training samples
            face = cv2.cvtColor(face, cv2.COLOR_BGR2GRAY)
            results = model.predict(face)  # (label, distance); a lower distance is a closer match

            confidence = 0
            if results[1] < 500:
                confidence = int(100 * (1 - (results[1])/400))
                display_string = f"{confidence}% Confident it is {name}"
                cv2.putText(image, display_string, (100, 120), cv2.FONT_HERSHEY_COMPLEX, 1, (255, 120, 150), 2)

            if confidence > confidence_threshold:
                cv2.putText(image, f"Hey {name}", (250, 450), cv2.FONT_HERSHEY_COMPLEX, 1, (0, 255, 0), 2)
            else:
                cv2.putText(image, "I don't know", (250, 450), cv2.FONT_HERSHEY_COMPLEX, 1, (0, 0, 255), 2)
            cv2.imshow('Face Recognition', image)

        except Exception as e:
            # Reached, for example, when no face was detected and face is None
            print(f"Error Recognising Face: {e}")
            cv2.putText(image, "Error Recognising Face", (130, 120), cv2.FONT_HERSHEY_COMPLEX, 1, (0, 0, 255), 2)
            cv2.putText(image, "Locked", (250, 450), cv2.FONT_HERSHEY_COMPLEX, 1, (0, 0, 255), 2)
            cv2.imshow('Face Recognition', image)

        if cv2.waitKey(1) == 13:  # 13 is the Enter key
            break

    cap.release()
    cv2.destroyAllWindows()

The recognize_face function captures frames from the webcam, detects faces, and then uses the trained model to recognize the faces in real-time. The confidence level is displayed, and the system responds with a personalized message if the confidence is above a certain threshold.
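The value returned by the LBPH model's predict call is a distance, where lower means a closer match, and the code converts it to a percentage before comparing it with the threshold. The sketch below isolates that arithmetic so it can be inspected without a camera. The helper names are hypothetical; the constants 400 and 80 are taken from the code above.

```python
def distance_to_confidence(distance, scale=400):
    """Convert an LBPH distance to the percentage used in this tutorial."""
    return int(100 * (1 - distance / scale))


def is_recognized(distance, threshold=80, scale=400):
    """True when the derived confidence exceeds the greeting threshold."""
    return distance_to_confidence(distance, scale) > threshold


print(distance_to_confidence(0))    # 100
print(distance_to_confidence(200))  # 50
print(distance_to_confidence(450))  # -12
print(is_recognized(200))           # False
```

Note that distances between 400 and 500 pass the results[1] < 500 check but produce a negative percentage, so the scale and the threshold are worth tuning together for your own camera and lighting.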

Error Handling Explanation

In the "Recognizing Faces in Real-Time" section, an exception handling block has been included to address potential errors during the real-time recognition process. Let's explore why this block is necessary:

1. Face Recognition Error:

The try block attempts to recognize faces in real-time using the trained model. However, various issues can arise during this process, such as incomplete face detection or unexpected image formats. The except block is designed to handle these errors.

2. Handling Exceptions:

The except block catches any exceptions that occur during face recognition and provides a controlled response. It prints an error message to the console, updates the display to indicate the recognition error, and continues the loop. This ensures that a single recognition failure doesn't interrupt the entire real-time recognition process.

3. User Feedback:

By displaying an error message and locking the system temporarily, the user is informed about the recognition error. This enhances the user experience and prevents the system from behaving unpredictably in the presence of unexpected issues.
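The pattern described above, where a failure on one frame is reported and the loop simply moves on, can be sketched without a camera. Everything in this example is illustrative: run_recognition_loop and fake_recognize are hypothetical stand-ins for the webcam loop and the model call.

```python
def run_recognition_loop(frames, recognize):
    """Apply `recognize` to each frame; a failure on one frame does not stop the loop."""
    statuses = []
    for frame in frames:
        try:
            statuses.append(recognize(frame))
        except Exception as error:
            # Report the problem and mark this frame as locked, then continue.
            print(f"Error Recognising Face: {error}")
            statuses.append("Locked")
    return statuses


def fake_recognize(frame):
    """Stand-in for the model: fails when no face is present in the frame."""
    if frame is None:
        raise ValueError("no face in frame")
    return f"Hey {frame}"


print(run_recognition_loop(["alice", None, "bob"], fake_recognize))
# ['Hey alice', 'Locked', 'Hey bob']
```

The middle frame fails, yet the third frame is still processed, which is the behavior the try/except block provides in recognize_face.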

UI Enhancement

The face recognition system provided here is a basic implementation. You can enhance and customize it based on your requirements. Here are a few ideas:

1. Graphical User Interface (GUI):

Create a GUI to provide a more visually appealing and user-friendly interaction. Libraries like Tkinter, PyQt, or Kivy in Python can help you design intuitive interfaces.

Example code snippet for a Tkinter-based GUI:

from tkinter import Tk, Label, Button, Entry, StringVar, messagebox

class FaceRecognitionApp:
    def __init__(self, master):
        self.master = master
        master.title("Face Recognition App")
        self.model = None  # Set by train_model()

        self.label = Label(master, text="Enter Your Name:")
        self.label.grid(row=0, column=0, padx=10, pady=10)

        self.name_entry = Entry(master)
        self.name_entry.grid(row=0, column=1, padx=10, pady=10)

        self.collect_button = Button(master, text="Collect Samples", command=self.collect_samples)
        self.collect_button.grid(row=1, column=0, columnspan=2, pady=10)

        self.train_button = Button(master, text="Train Model", command=self.train_model)
        self.train_button.grid(row=2, column=0, columnspan=2, pady=10)

        self.recognize_button = Button(master, text="Recognize Faces", command=self.recognize_faces)
        self.recognize_button.grid(row=3, column=0, columnspan=2, pady=10)

        self.status_var = StringVar()
        self.status_label = Label(master, textvariable=self.status_var, fg="blue")
        self.status_label.grid(row=4, column=0, columnspan=2, pady=10)

    def collect_samples(self):
        name = self.name_entry.get().strip()
        if not name:
            messagebox.showerror("Input Error", "Please enter your name.")
        else:
            self.status_var.set("Collecting samples for " + name + "...")
            collect_face_samples(name)
            self.status_var.set("Samples collected for " + name + ".")

    def train_model(self):
        self.status_var.set("Training the model, please wait...")
        self.model = train_face_recognition()
        self.status_var.set("Model trained successfully!")

    def recognize_faces(self):
        name = self.name_entry.get().strip()
        if not name:
            messagebox.showerror("Input Error", "Please enter your name.")
        elif self.model is None:
            # Avoid calling the recognizer before a model has been trained
            messagebox.showerror("Model Error", "Please train the model first.")
        else:
            self.status_var.set("Recognizing faces, please wait...")
            recognize_face(self.model, name)
            self.status_var.set("Recognition complete for " + name + ".")

def collect_face_samples(name, data_path='D://Projects//Face-Detection-using-OpenCV//image_samples'):
    cap = cv2.VideoCapture(0)
    count = 0

    while True:
        ret, frame = cap.read()
        detected_face = face_extractor(frame)

        if detected_face is not None:
            count += 1
            face = cv2.resize(detected_face, (200, 200))
            face = cv2.cvtColor(face, cv2.COLOR_BGR2GRAY)

            file_name_path = os.path.join(data_path, f'{name}_image{count}.jpg')
            cv2.imwrite(file_name_path, face)

            cv2.putText(face, str(count), (50, 50), cv2.FONT_HERSHEY_COMPLEX, 1, (0, 255, 0), 2)
            cv2.imshow('Face Cropper', face)
        else:
            print("Face not found")

        if cv2.waitKey(1) == 13 or count == 100:
            break

    cap.release()
    cv2.destroyAllWindows()
    print("Collecting Samples Complete")

def train_face_recognition(data_path='D://Projects//Face-Detection-using-OpenCV//image_samples'):
    onlyfiles = [f for f in os.listdir(data_path) if os.path.isfile(os.path.join(data_path, f))]
    Training_Data, Labels = [], []

    for i, file in enumerate(onlyfiles):
        image_path = os.path.join(data_path, file)
        print("Loading image:", image_path)
        images = cv2.imread(image_path, cv2.IMREAD_GRAYSCALE)

        if images is not None:
            Training_Data.append(np.asarray(images, dtype=np.uint8))
            Labels.append(i)
            print("Image loaded successfully.")
        else:
            print("Error loading image:", image_path)

    print("Number of training images loaded:", len(Training_Data))

    Labels = np.asarray(Labels, dtype=np.int32)

    model = cv2.face.LBPHFaceRecognizer_create()
    model.train(np.asarray(Training_Data), np.asarray(Labels))
    print("Model trained successfully")

    return model

def recognize_face(model, name):
    cap = cv2.VideoCapture(0)

    while True:
        ret, frame = cap.read()
        image = frame  # Full frame, used for drawing the overlay text
        face = face_extractor(frame)  # Cropped face, or None if no face was detected

        try:
            face = cv2.resize(face, (200, 200))  # Match the size of the training samples
            face = cv2.cvtColor(face, cv2.COLOR_BGR2GRAY)
            results = model.predict(face)
            print(results)

            confidence = 0
            if results[1] < 500:
                confidence = int(100 * (1 - (results[1])/400))
                display_string = f'{confidence}% Confident it is {name}'
                cv2.putText(image, display_string, (100, 120), cv2.FONT_HERSHEY_COMPLEX, 1, (255, 120, 150), 2)

            if confidence > 80:
                cv2.putText(image, f'Hey {name}', (250, 450), cv2.FONT_HERSHEY_COMPLEX, 1, (0, 255, 0), 2)
                cv2.imshow('Face Recognition', image)
            else:
                cv2.putText(image, "I don't know", (250, 450), cv2.FONT_HERSHEY_COMPLEX, 1, (0, 0, 255), 2)
                cv2.imshow('Face Recognition', image)

        except Exception as e:
            print(f"Error Recognising Face: {e}")
            cv2.putText(image, "Error Recognising Face", (130, 120), cv2.FONT_HERSHEY_COMPLEX, 1, (0, 0, 255), 2)
            cv2.putText(image, "Locked", (250, 450), cv2.FONT_HERSHEY_COMPLEX, 1, (0, 0, 255), 2)
            cv2.imshow('Face Recognition', image)

        if cv2.waitKey(1) == 13:
            break

    cap.release()
    cv2.destroyAllWindows()

root = Tk()
app = FaceRecognitionApp(root)
root.mainloop()

2. Database Integration:

Integrating a database into your face recognition system can enhance security, user management, and data storage. Here's a brief overview of the options:

SQLite Database: SQLite is a lightweight, file-based database that can be easily integrated into Python applications. It's suitable for small to medium-sized projects.

MySQL or PostgreSQL: For larger projects, consider using more robust databases like MySQL or PostgreSQL. Libraries such as mysql-connector-python or psycopg2 can facilitate the integration.
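As a starting point for the SQLite option, the sketch below stores registered users with Python's built-in sqlite3 module. The table layout, column names, and helper functions are assumptions made for illustration; they are not part of the original project.

```python
import sqlite3


def create_user_store(db_path=":memory:"):
    """Open the database and create the users table if needed (hypothetical schema)."""
    conn = sqlite3.connect(db_path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS users ("
        "id INTEGER PRIMARY KEY AUTOINCREMENT, "
        "name TEXT NOT NULL UNIQUE, "
        "sample_dir TEXT NOT NULL)"
    )
    return conn


def register_user(conn, name, sample_dir):
    """Insert a user and return the generated integer id."""
    with conn:  # Commits on success, rolls back on error
        cursor = conn.execute(
            "INSERT INTO users (name, sample_dir) VALUES (?, ?)", (name, sample_dir)
        )
    return cursor.lastrowid


def lookup_user(conn, user_id):
    """Return the name stored for an id, or None if the id is unknown."""
    row = conn.execute("SELECT name FROM users WHERE id = ?", (user_id,)).fetchone()
    return row[0] if row else None


conn = create_user_store()
user_id = register_user(conn, "alice", "image_samples/alice")
print(user_id, lookup_user(conn, user_id))  # 1 alice
```

The integer id could double as the label passed to the recognizer during training, so that a predicted label can be turned back into a name with lookup_user.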

These enhancements can add a layer of sophistication and functionality to your face recognition system, making it adaptable to various use cases and scenarios.

Conclusion

In this blog, we've explored the implementation of a face recognition system using OpenCV and machine learning. The provided code captures face samples, trains a model, and performs real-time face recognition. By following the steps outlined and customizing the code, you can create a versatile and robust face recognition system tailored to your specific needs.


GitHub Repository Link:

Face Recognition System GitHub Repository

Feel free to contribute or raise issues if you encounter any challenges during implementation.

Happy coding!
